NeAT: Neural Adaptive Tomography

Darius Rückert, Yuanhao Wang, Rui Li, Ramzi Idoughi, Wolfgang Heidrich

ACM Transactions on Graphics (Proc. SIGGRAPH), 41 (4), 1-13, 2022

Abstract

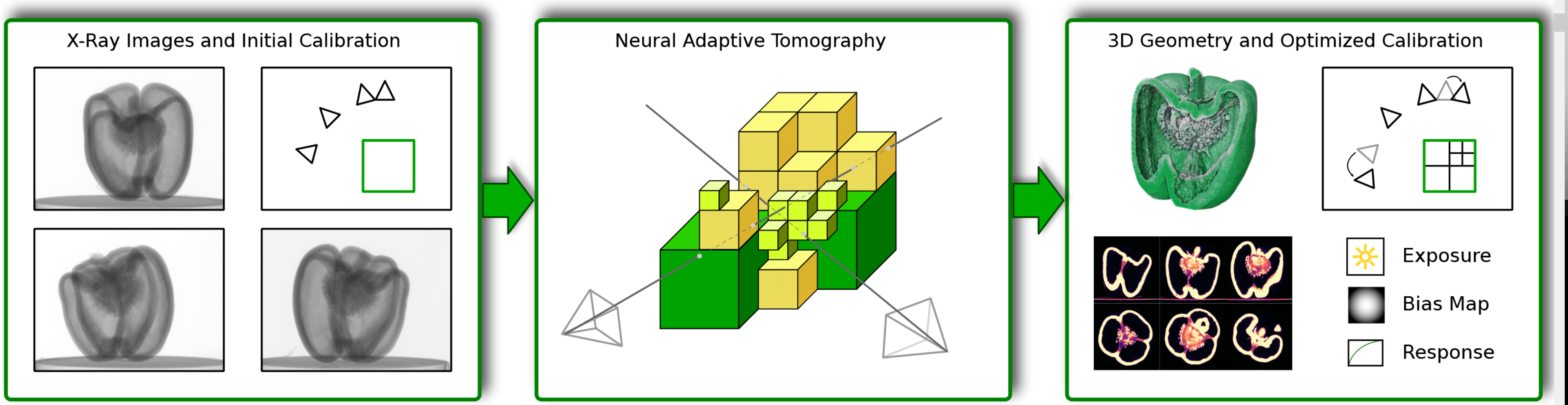

In this paper, we present Neural Adaptive Tomography (NeAT), the first adaptive, hierarchical neural rendering pipeline for multi-view inverse rendering. By combining neural features with an adaptive explicit representation, we achieve reconstruction times far superior to those of existing neural inverse rendering methods. The adaptive explicit representation improves efficiency by facilitating empty space culling and concentrating samples in complex regions, while the neural features act as a neural regularizer for the 3D reconstruction. The NeAT framework is designed specifically for the tomographic setting, which consists only of semi-transparent volumetric scenes instead of opaque objects. In this setting, NeAT produces higher-quality reconstructions than existing optimization-based tomography solvers while being substantially faster.

Neural Adaptive Tomography uses a hybrid explicit-implicit neural representation for tomographic image reconstruction. Left: The input is a set of X-ray images, typically with an ill-posed geometric configuration (sparse views or limited angular coverage). Center: NeAT represents the scene as an octree with neural features in each leaf node. This representation lends itself to an efficient differentiable rendering algorithm, presented in this paper. Right: Through neural rendering, NeAT can reconstruct the 3D geometry even for ill-posed configurations, while simultaneously performing geometric and radiometric self-calibration.
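To make the octree idea concrete, here is a minimal illustrative sketch in Python. It is not the authors' implementation. It assumes a Beer-Lambert attenuation model for the X-ray measurement, and it replaces the per-leaf neural features and decoder with a single constant density per leaf. All names (`build_leaves`, `ray_box_length`, `sphere_density`) are hypothetical. The sketch shows the two efficiency mechanisms mentioned above: empty nodes are culled, and subdivision continues only where the density varies.

```python
import itertools
import numpy as np

def build_leaves(density_fn, lo, hi, depth=0, max_depth=5, tol=1e-6):
    """Return a list of (lo, hi, density) leaves for the box [lo, hi].

    Assumption: a constant density per leaf stands in for NeAT's neural features.
    """
    corners = np.array([[x, y, z] for x in (lo[0], hi[0])
                        for y in (lo[1], hi[1]) for z in (lo[2], hi[2])])
    center = 0.5 * (lo + hi)
    samples = np.array([density_fn(p) for p in np.vstack([corners, center])])
    if samples.max() <= 0.0:
        return []  # empty space culling: no leaf is stored (heuristic, 9 samples)
    if depth == max_depth or samples.max() - samples.min() < tol:
        return [(lo, hi, float(samples.mean()))]
    leaves = []
    for octant in itertools.product((0, 1), repeat=3):
        o = np.array(octant)
        child_lo = np.where(o == 0, lo, center)
        child_hi = np.where(o == 0, center, hi)
        leaves += build_leaves(density_fn, child_lo, child_hi,
                               depth + 1, max_depth, tol)
    return leaves

def ray_box_length(origin, direction, lo, hi):
    """Length of the ray segment inside an axis-aligned box (slab test).

    Assumes a unit-length direction with no zero components.
    """
    t0 = (lo - origin) / direction
    t1 = (hi - origin) / direction
    t_near = np.max(np.minimum(t0, t1))
    t_far = np.min(np.maximum(t0, t1))
    return max(0.0, t_far - max(t_near, 0.0))

def sphere_density(p, center=np.array([0.5, 0.5, 0.5]), radius=0.25, value=2.0):
    """Toy volume: homogeneous sphere inside the unit cube."""
    return value if np.sum((p - center) ** 2) <= radius ** 2 else 0.0

leaves = build_leaves(sphere_density, np.zeros(3), np.ones(3))

# One ray through the sphere center; only stored (non-empty) leaves are visited.
origin = np.array([-0.5, 0.45, 0.52])
direction = np.array([2.0, 0.1, -0.04])
direction = direction / np.linalg.norm(direction)

line_integral = sum(d * ray_box_length(origin, direction, lo, hi)
                    for lo, hi, d in leaves)
intensity = np.exp(-line_integral)  # Beer-Lambert, unit source intensity

print(f"{len(leaves)} leaves, line integral ~ {line_integral:.3f}")
```

For this toy sphere the exact line integral through the center is density times diameter, 2.0 × 0.5 = 1.0, so the printed value should be close to 1. In an actual reconstruction the per-leaf densities would be unknowns optimized against the measured X-ray images, which this sketch does not attempt.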

Paper

paper [Rückert_2022_NeAT.pdf]

Code and Dataset

Source code [Source code]

Dataset [External link]

Citation

@article{10.1145/3528223.3530121,
author = {R\"{u}ckert, Darius and Wang, Yuanhao and Li, Rui and Idoughi, Ramzi and Heidrich, Wolfgang},
title = {NeAT: Neural Adaptive Tomography},
journal = {ACM Trans. Graph.},
volume = {41},
number = {4},
year = {2022},
doi = {10.1145/3528223.3530121},
publisher = {Association for Computing Machinery}
}