Stochastic Convolutional Sparse Coding

Jinhui Xiong, Peter Richtárik, Wolfgang Heidrich

The International Symposium on Vision, Modeling, and Visualization, 2019

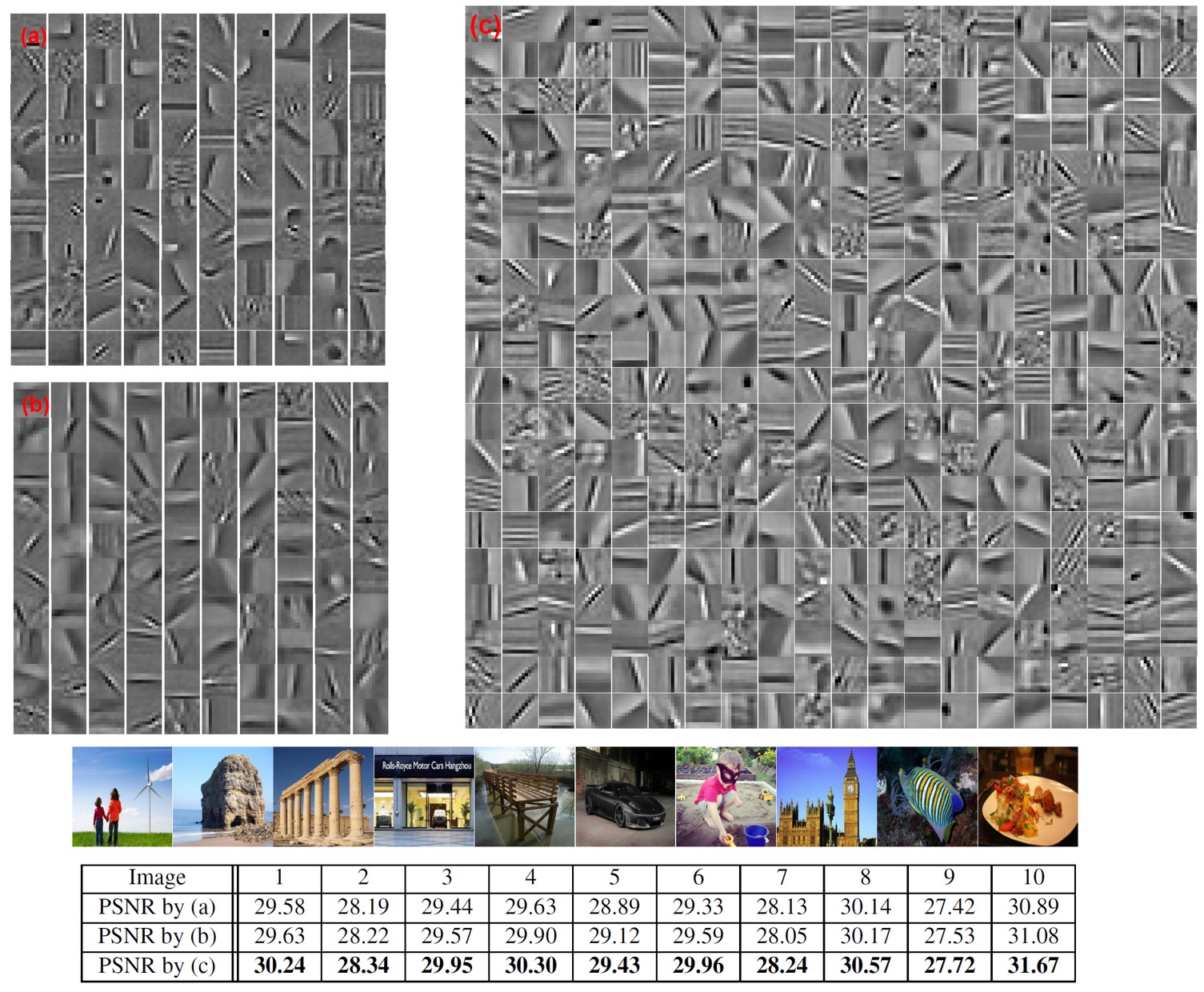

Top: Filters learned from large-scale datasets by our method (SOCSC) and by the comparable online method. Bottom: Ten 256 × 256 test images and their corresponding reconstruction quality in the image inpainting application. (a) The under-complete dictionary (11 × 11 × 100) learned by the comparison method; (b) the under-complete dictionary (11 × 11 × 100) learned by SOCSC; (c) the over-complete dictionary (11 × 11 × 400) learned by SOCSC. The under-complete dictionaries, mainly composed of Gabor-like filters, can be seen as subsets of the over-complete dictionary, which contains a number of extra low-contrast image features.

Abstract

State-of-the-art methods for Convolutional Sparse Coding (CSC) usually employ Fourier-domain solvers in order to speed up the convolution operators. However, this approach is not without shortcomings. For example, Fourier-domain representations implicitly assume circular boundary conditions and make it hard to fully exploit the sparsity of the problem as well as the small spatial support of the filters. In this work, we propose a novel stochastic spatial-domain solver, in which a randomized subsampling strategy is introduced during the learning of sparse codes. Afterwards, we extend the proposed strategy in conjunction with online learning, scaling the CSC model up to very large sample sizes. In both cases, we show experimentally that the proposed subsampling strategy, with a reasonable selection of the subsampling rate, outperforms the state-of-the-art frequency-domain solvers in terms of execution time without sacrificing learning quality. Finally, we evaluate the effectiveness of the over-complete dictionary learned from large-scale datasets, which demonstrates an improved sparse representation of natural images on account of more abundant learned image features.
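The remark about circular boundary conditions can be illustrated with a small one-dimensional sketch. It is not taken from the paper or its released code: the function names `fft_circular_convolve` and `spatial_convolve`, the toy signal, and the toy filter are all hypothetical and chosen only for illustration. The sketch compares a convolution computed by pointwise multiplication in the Fourier domain with a zero-padded spatial-domain convolution.

```python
import numpy as np

def fft_circular_convolve(signal, kernel):
    """Convolve by pointwise multiplication in the Fourier domain.

    The kernel is zero-padded to the signal length, so samples that fall
    off one end of the signal wrap around to the other end.
    """
    n = len(signal)
    spectrum = np.fft.fft(signal) * np.fft.fft(kernel, n)
    return np.real(np.fft.ifft(spectrum))

def spatial_convolve(signal, kernel):
    """Linear (zero-padded) convolution, cropped to the signal length."""
    return np.convolve(signal, kernel)[:len(signal)]

# Toy data (hypothetical): a short signal and a filter with small support.
signal = np.array([1.0, 2.0, 3.0, 4.0, 5.0, 6.0])
kernel = np.array([1.0, -1.0, 0.5])

circular = fft_circular_convolve(signal, kernel)
linear = spatial_convolve(signal, kernel)

# Only the first len(kernel) - 1 samples are affected by the wrap-around.
boundary = len(kernel) - 1
print("circular:", np.round(circular, 3))
print("linear:  ", np.round(linear, 3))
print("interior agrees:", np.allclose(circular[boundary:], linear[boundary:]))
```

In this toy case the two results agree everywhere except in the first `len(kernel) - 1` samples, where the Fourier-domain result mixes in values from the opposite end of the signal. A spatial-domain solver is free to choose a different boundary treatment, which is one of the motivations stated in the abstract.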

Paper

paper [Xiong2019StochasticCSC.pdf (5.0MB)]

supplement [Xiong2019StochasticCSC_supplement.pdf (2.3MB)]

code [https://github.com/vccimaging/Stochastic-Convolutional-Sparse-Coding]